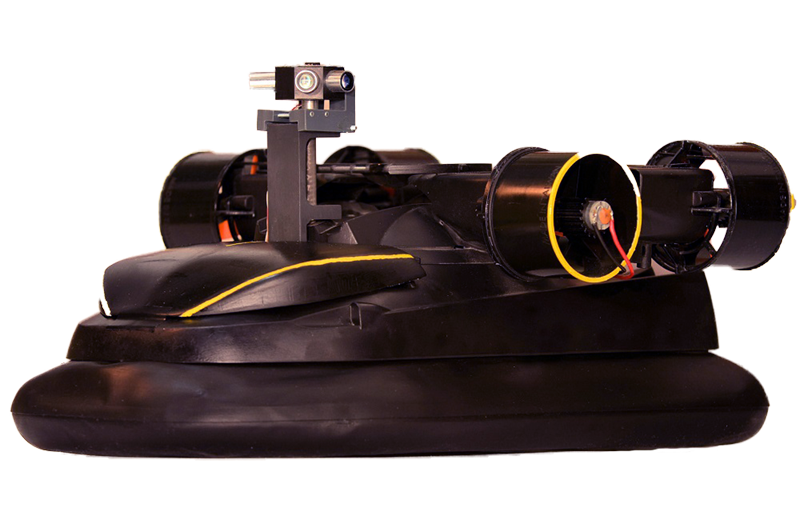

The LORA Hovercraft Robot

The LORA hovercraft robot implements the honeybee's optical-flow-based visual guidance strategy in the horizontal plane. The miniature hovercraft LORA is fully actuated (size: 36 x 21 x 18 cm, mass: 0.878 kg) and can navigate along a tunnel whose geometry is unknown to it. Its autopilot, also called LORA (Lateral Optic Flow Regulation Autopilot), builds on behavioral studies of the honeybee, which showed that bees regulate the optical flow they perceive in order to avoid walls.

The LORA autopilot is a dual lateral optical flow regulator. It relies on two interdependent visual-motor loops. Each loop has its own optical flow setpoint and controls one degree of freedom of the robot. The first loop is a bilateral optical flow regulator that controls the robot's forward speed. The second is a unilateral optical flow regulator that controls its lateral position relative to the walls. The keystone of this bio-inspired guidance system is a third loop that holds the robot's heading. This loop uses measurements from a micro-gyrometer and a magnetic micro-compass to keep the heading fixed, so that the hovercraft moves purely in translation while the optical flow is measured. This matters because rotational optical flow carries no information about distance. Once rotation is removed, only the translational component remains, which equals speed divided by distance.
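The two-loop structure can be illustrated with a toy simulation. This is a minimal sketch, not LORA's actual controller. It assumes point-mass kinematics in a straight corridor, with optical flow measured at 90° on each side. It also assumes, as one reading of "bilateral" and "unilateral", that the speed loop acts on the sum of the left and right optical flows and the lateral loop acts on the larger of the two. The heading loop is not modeled; the heading is simply treated as perfectly held. The function names, gains and setpoints are hypothetical.

```python
"""Toy model of a dual lateral optical flow regulator, LORA-style.

Assumptions (not LORA's actual dynamics): point-mass kinematics in a straight
corridor, optical flow measured at 90 degrees on each side, heading perfectly
held, first-order speed and lateral updates. Setpoints and gains are hypothetical.
"""


def lateral_flows(vx: float, y: float, width: float) -> tuple[float, float]:
    """Translational optical flow (rad/s) on each side = forward speed / wall distance."""
    return vx / (width - y), vx / y  # (left, right); y = distance to the right wall


def simulate(width: float, sum_setpoint: float = 3.0, side_setpoint: float = 2.0,
             k_speed: float = 1.0, k_side: float = 0.5,
             dt: float = 0.01, duration: float = 30.0) -> tuple[float, float]:
    """Return (forward speed, distance to right wall) reached after `duration` seconds."""
    vx, y = 0.1, width / 2  # start slowly, on the corridor midline
    for _ in range(round(duration / dt)):
        om_left, om_right = lateral_flows(vx, y, width)

        # Speed loop (bilateral): drive the SUM of both flows toward its setpoint.
        vx += k_speed * (sum_setpoint - (om_left + om_right)) * dt

        # Lateral loop (unilateral): regulate the LARGER flow, i.e. the nearer wall.
        # Positive error = flow too high = too close, so move away from that wall.
        error = max(om_left, om_right) - side_setpoint
        away_from_right = 1.0 if om_right >= om_left else -1.0
        y += away_from_right * k_side * error * dt

        # Numerical guard only: keep the point strictly inside the corridor.
        y = min(max(y, 0.01 * width), 0.99 * width)
    return vx, y


for width in (1.0, 0.5):
    vx, y = simulate(width)
    print(f"width {width:.1f} m -> speed {vx:.3f} m/s, right-wall distance {y:.3f} m")
```

With these hypothetical setpoints, the model should settle at a speed of about two thirds of the corridor width per second, one third of the width from the right wall. Halving the width therefore roughly halves the speed, even though the controller only ever uses speed/distance ratios and never measures speed or distance directly.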

The robot LORA is equipped with a minimalist biomimetic compound eye consisting of two or four local optical flow sensors, depending on the experiment. These sensors are also called Elementary Motion Detectors (EMDs). LORA's visual system therefore comprises only 4 or 8 pixels, with each pair of adjacent pixels forming one local optical flow sensor. This parsimonious visual system is sufficient for the autopilot to regulate the ratio of speed to distance from the walls, which by definition is the translational optical flow. By regulating this ratio, the autopilot controls the robot's speed and lateral position jointly, without measuring or estimating either of these two quantities.
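One classic way a two-pixel EMD can estimate local optical flow is the "time of travel" scheme. A contrast edge crosses the first pixel's optical axis and then the second's. The local flow is the inter-pixel angle divided by the delay between the two crossings. The sketch below is a minimal illustration under stated assumptions, not the sensor's actual processing. The following are all hypothetical: the 4° inter-pixel angle, the sigmoid pixel response, the 0.5 detection threshold, the constant angular sweep of the edge (a fair approximation near 90°, where the flow equals speed divided by wall distance), and the function names.

```python
import math

# Hypothetical parameters, chosen only for illustration.
DELTA_PHI = math.radians(4.0)  # assumed angle between the two pixels' optical axes (rad)
SIGMA = 0.01                   # assumed angular blur of each pixel's response (rad)


def pixel_response(edge_angle: float, pixel_axis: float) -> float:
    """Smoothed step: output rises from 0 to 1 as a contrast edge crosses the pixel axis."""
    return 1.0 / (1.0 + math.exp(-(edge_angle - pixel_axis) / SIGMA))


def crossing_time(samples, times, threshold=0.5):
    """First time the signal rises through `threshold`, linearly interpolated."""
    for i in range(1, len(samples)):
        if samples[i - 1] < threshold <= samples[i]:
            frac = (threshold - samples[i - 1]) / (samples[i] - samples[i - 1])
            return times[i - 1] + frac * (times[i] - times[i - 1])
    return None


def time_of_travel_emd(sig_a, sig_b, times, delta_phi=DELTA_PHI):
    """Local optical flow estimate (rad/s): inter-pixel angle / crossing delay."""
    t_a, t_b = crossing_time(sig_a, times), crossing_time(sig_b, times)
    if t_a is None or t_b is None or t_b <= t_a:
        return None  # no edge detected, or motion in the opposite direction
    return delta_phi / (t_b - t_a)


# Synthetic scene: robot at speed V passing a wall at distance D, viewed at 90
# degrees, where the translational optical flow is V / D.
V, D = 0.5, 0.25                              # m/s, m (hypothetical)
true_flow = V / D                             # 2.0 rad/s
dt = 1e-4
times = [i * dt for i in range(3000)]         # 0.3 s of samples
edge = [-0.2 + true_flow * t for t in times]  # edge angle sweeps past both pixels
sig_a = [pixel_response(e, 0.0) for e in edge]
sig_b = [pixel_response(e, DELTA_PHI) for e in edge]
estimate = time_of_travel_emd(sig_a, sig_b, times)
print(f"true {true_flow:.3f} rad/s, EMD estimate {estimate:.3f} rad/s")
```

Only the ratio V / D reaches the two pixels. Doubling both the speed and the wall distance would produce exactly the same crossing delay and the same estimate.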

The LORA hovercraft robot can thus travel without collision through tunnels of various configurations. These include straight, tapered, sloped and curved tunnels, tunnels with an untextured section on one wall, and even tunnels with a non-stationary section. Like a bee, it automatically adjusts its forward speed and its lateral position relative to the walls. This bio-inspired visual strategy provides an elegant solution for navigating unknown tunnels with fully actuated robots. It also helps explain how a 100 mg bee can navigate with so few computational resources, without resorting to conventional avionics such as sonar, radar, lidar, or GNSS (GPS, Galileo, BeiDou, GLONASS).
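The speed adjustment can be worked out by hand under an idealized model. The model assumes straight walls, optical flow measured at 90°, a perfectly held heading, and both loops settled with the robot following its right wall. The notation is ours, not the source's. $D$ is the local tunnel width, $y$ the distance to the right wall, $V$ the forward speed, and $\omega^{\Sigma}_{set}$ and $\omega^{side}_{set}$ the setpoints of the bilateral and unilateral loops. In this model, the bilateral loop is taken to act on the sum of the two flows and the unilateral loop on the flow of the followed wall.

$$
\omega_R = \frac{V}{y}, \qquad \omega_L = \frac{V}{D - y}
$$

$$
\omega_L + \omega_R = \omega^{\Sigma}_{set}, \quad \omega_R = \omega^{side}_{set}
\;\Rightarrow\; \omega_L = \omega^{\Sigma}_{set} - \omega^{side}_{set}
$$

Equating $V = \omega_R\, y = \omega_L\, (D - y)$ gives:

$$
y = D\,\frac{\omega^{\Sigma}_{set} - \omega^{side}_{set}}{\omega^{\Sigma}_{set}}, \qquad
V = D\,\frac{\omega^{side}_{set}\left(\omega^{\Sigma}_{set} - \omega^{side}_{set}\right)}{\omega^{\Sigma}_{set}}
$$

Both the speed and the distance to the followed wall scale linearly with $D$. If the width changes slowly, as in a tapered tunnel, the robot therefore slows down in proportion to the local width. In this idealized model the solution requires $\omega^{\Sigma}_{set}/2 < \omega^{side}_{set} < \omega^{\Sigma}_{set}$. With a smaller unilateral setpoint, the nearer-wall flow can never drop to it, and the robot is pushed toward the centerline instead.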

Publications

- Serres, J. R., & Ruffier, F. (2015). Biomimetic autopilot based on minimalistic motion vision for navigating along corridors comprising U-shaped and S-shaped turns. Journal of Bionic Engineering, 12(1), 47-60. DOI: 10.1016/S1672-6529(14)60099-8

- Roubieu, F. L., Serres, J. R., Colonnier, F., Franceschini, N., Viollet, S., & Ruffier, F. (2014). A biomimetic vision-based hovercraft accounts for bees’ complex behaviour in various corridors. Bioinspiration & Biomimetics, 9(3), 036003. DOI: 10.1088/1748-3182/9/3/036003

- Roubieu, F. L., Serres, J. R., Franceschini, N., Ruffier, F., & Viollet, S. (2012). A fully-autonomous hovercraft inspired by bees: Wall following and speed control in straight and tapered corridors. In 2012 IEEE International Conference on Robotics and Biomimetics (ROBIO), 11-14 December 2012, Guangzhou, China (pp. 1311-1318). IEEE. DOI: 10.1109/ROBIO.2012.6491150

- Roubieu, F. L., Serres, J. R., Viollet, S., Ruffier, F., & Franceschini, N. (2011). Toward a fully autonomous hovercraft visually guided thanks to its own bio-inspired motion sensors. In International Workshop on Bio-inspired Robots, 6-8 April 2011, Nantes, France.